
Docker-compose based Ansible alternative, written in Python. Misterio can manage a set of compose targets as one, applying status changes easily. If you have stateless microservices, you will love Misterio.


A tool that weighs the soul of incoming HTTP requests using proof-of-work to stop AI crawlers. A beautiful idea against crazy AI bots.


Good Karma Kit

The Good Karma Kit is “a Docker Compose project to run on servers with spare CPU, disk, and bandwidth.” I like the idea in principle, but it is always complicated in practice: if you host unknown content, you can easily get into trouble (pirated content or worse…)

Read More -

Sometimes you need to create a lot of CronJobs in K8s. In particular, in my last project I needed to create a lot of stupid “web hooks” to fire complex job executions. K8s is well suited for this task because it takes care of launching a single job instance and relaunching it in case of error.

Read More -

In my last projects, I got used to using K8s CronJobs to schedule tasks.

The most effective way of doing it is to create a super-tiny CronJob whose sole purpose is to call a REST webhook of a specific microservice, firing some action in a predictable way.
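To give an idea of how small such a CronJob can be, here is a minimal sketch in Python that builds the manifest as a plain dictionary and prints it as JSON (which `kubectl apply -f` accepts as well as YAML). The function name, the job names, the webhook URLs and the `curlimages/curl` image are all assumptions made for illustration; they are not taken from a real project.

```python
import json


def build_webhook_cronjob(name: str, schedule: str, webhook_url: str) -> dict:
    """Return a batch/v1 CronJob manifest whose only job is to POST to a webhook."""
    return {
        "apiVersion": "batch/v1",
        "kind": "CronJob",
        "metadata": {"name": name},
        "spec": {
            "schedule": schedule,
            # Do not start a new run while the previous one is still going.
            "concurrencyPolicy": "Forbid",
            "jobTemplate": {
                "spec": {
                    # Retry a failed call a couple of times before giving up.
                    "backoffLimit": 2,
                    "template": {
                        "spec": {
                            "restartPolicy": "Never",
                            "containers": [
                                {
                                    "name": "call-webhook",
                                    # Assumed image: any image shipping curl works.
                                    "image": "curlimages/curl",
                                    # --fail makes curl exit non-zero on HTTP errors,
                                    # so K8s sees the job as failed and retries it.
                                    "command": ["curl", "--fail", "-X", "POST", webhook_url],
                                }
                            ],
                        }
                    },
                }
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical hooks: one tiny CronJob per webhook.
    hooks = {
        "nightly-report": ("0 2 * * *", "http://reports:8080/hooks/nightly"),
        "cache-cleanup": ("*/15 * * * *", "http://cache:8080/hooks/cleanup"),
    }
    for job_name, (cron, url) in hooks.items():
        print(json.dumps(build_webhook_cronjob(job_name, cron, url), indent=2))
```

Generating the manifests from a small table like `hooks` keeps the "a lot of CronJobs" case manageable: adding a new scheduled action means adding one line.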

Read More -

Context: Spring microservice application to be deployed on K8s via helm + boring Friday

In this scenario, you end up writing the SAME configuration string in a lot of places:

- On at least 2 application.properties (main and test)

- On the final, helm-generated application properties (or in the relevant environment variables, if you use them instead of the properties files)

- On the default K8s values.yaml used by helm. Possibly on other yaml files too, all documented a bit, to be kind to the K8s SRE.

- On the relevant Java code, as a @Value annotation to finally use that damn config.

Read More -

K8s and limits

On K8s, for every pod you can define how much memory and CPU the pod needs. To make things "simpler", K8s defines two sets of values: requests and limits, both for CPU and memory. After some trouble on GCP, I was forced to dig into the subject.
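As a quick reminder of how the two sets relate: requests are what the scheduler reserves for the pod, limits are the cap enforced at runtime, and a request may never exceed its limit. The sketch below, in Python, parses a subset of the K8s quantity suffixes (`m` for millicores; `Ki`, `Mi`, `Gi`, `k`, `M`, `G` for memory) and checks that rule. The function names and the sample values are assumptions chosen for illustration.

```python
def parse_cpu(quantity: str) -> float:
    """Convert a CPU quantity such as '500m' or '2' into cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


# Only a subset of the suffixes K8s accepts, enough for a sketch.
_MEMORY_UNITS = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30,
    "k": 10**3, "M": 10**6, "G": 10**9,
}


def parse_memory(quantity: str) -> int:
    """Convert a memory quantity such as '256Mi' or '1G' into bytes."""
    for suffix, factor in _MEMORY_UNITS.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # plain number of bytes


def check_resources(resources: dict) -> list[str]:
    """Return the list of resources whose request exceeds its limit."""
    problems = []
    requests = resources.get("requests", {})
    limits = resources.get("limits", {})
    if "cpu" in requests and "cpu" in limits:
        if parse_cpu(requests["cpu"]) > parse_cpu(limits["cpu"]):
            problems.append("cpu request exceeds limit")
    if "memory" in requests and "memory" in limits:
        if parse_memory(requests["memory"]) > parse_memory(limits["memory"]):
            problems.append("memory request exceeds limit")
    return problems


if __name__ == "__main__":
    # Hypothetical "resources" section of a container spec.
    resources = {
        "requests": {"cpu": "250m", "memory": "128Mi"},
        "limits": {"cpu": "500m", "memory": "256Mi"},
    }
    print(check_resources(resources))
```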

Read More -

A friend of mine asked for some insight on how to harden a Gitea server exposed on the Internet. Gitea is a web application for managing Git repositories.

Gitea is quite compact and less feature-rich than GitLab, but it is light and can manage issues, wikis and users.

Read More -

Bullet points:

- 1979: Unix V7 introduced the chroot system call to isolate the part of the filesystem a process can access.

- Various technologies were introduced up to 2006, like Virtuozzo (which patched Linux in a proprietary way).

- 2006: Process Containers. Launched by Google in 2006, it was designed for limiting, accounting and isolating resource usage (CPU, memory, disk I/O, network) of a collection of processes. It was renamed “Control Groups (cgroups)” a year later and eventually merged into Linux kernel 2.6.24.

- 2008: LXC. LXC (LinuX Containers) was the first, most complete implementation of a Linux container manager. It was implemented in 2008 using cgroups and Linux namespaces, and it works on a single Linux kernel without requiring any patches.

- 2013: Docker. Docker used LXC in its initial stages and later replaced that container manager with its own library, libcontainer. Docker offered a way to configure and manage containers, i.e. a de facto standard for this technology. As you can see, Docker was based on cgroups and LXC, technologies that were already five to seven years old.

- In September 2014 Google published the first release of Kubernetes.

- In 2015 Docker, CoreOS and others founded the Open Container Initiative (OCI). K8s does not need Docker anymore to work, but Docker's traction is still strong.

References:

Read More -

NILFS is a log-structured file system supporting versioning of the entire file system and continuous snapshotting, which allows users to even restore files mistakenly overwritten or destroyed just a few seconds ago.

Discussion on Hacker News

NILFS was developed by NTT Laboratories and published as open-source software under the GPL license, and is now available as part of the Linux kernel.

Read More -

My true personal opinion based on what customers ask and what co-workers use:

- docker and docker-compose are still the dev winners

- Podman is rising, but it offers no extra features, because Docker supports rootless mode too.

- Ignite – Use Firecracker VMs with Docker images (github.com/weaveworks). Super-fast VMs based on containers are gaining traction. Cloud providers are the driving force, but this idea can eventually be helpful for some service providers.

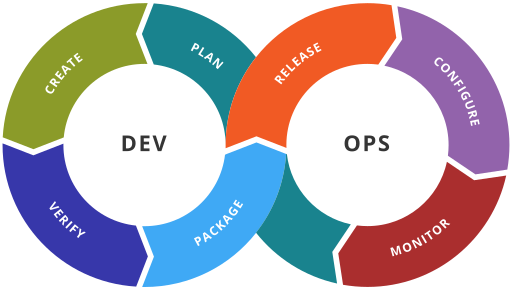

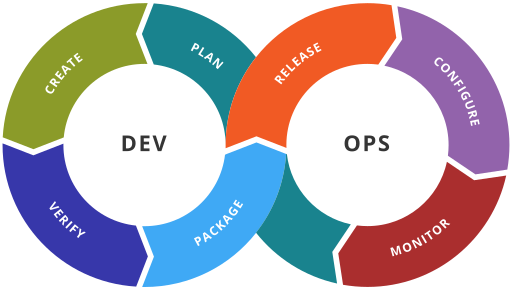

- K8s + Helm keep going. K8s is "the" abstraction layer for cloud providers, to some extent. K8s offers tons of extension points, for automation tools and for cloud providers. The only downside is its weight: for very simple deployments (fewer than 3 physical nodes) it is still overkill in pricing and management overhead. Also, it needs at least a speedy 2-core CPU to work. Costs rising due to inflation can have a negative impact on "Fat"-K8s solutions.

- Jenkins pipeline sucks. It is sad to say, but GitHub Actions & similia (like GitLab pipelines, Bitbucket pipelines and similar cloud provider services like AWS CodePipeline) are the winners. Jenkins declarative pipelines are elegant, but their declarative language depends on Jenkins plugins, so you must keep track of them. Also, it is common to build a Groovy library on top of it. And when you need to upgrade Jenkins from time to time, you face a lot of refactoring of pipeline syntax, which is increasingly difficult to estimate.


Hosting a Git repository can be a strong need if you want to keep your projects outside the cloud providers.

Keep in mind that the security offered by GitHub, GitLab and cloud providers like AWS, MS-Azure, etc. is damn good (often offering 2FA, two-factor authentication, for free), so think twice before deciding to host your own Git server. It is a good option if you do not plan to expose it to the Internet; otherwise the expertise required to secure it is not trivial.

Read More -

Let PiHole play nice with docker-compose

Apr 3, 2022 · 1 min read ·

When you run PiHole in a docker container, it can be difficult to build images on the same docker daemon, because docker-compose cannot pass DNS requests to another container during build, and normal DNS resolution fixes won’t work.

I solved this issue by reading this article and configuring my docker daemon to use a public DNS service:
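The exact configuration is in the full post; as a rough sketch of what such a change can look like, the Python snippet below adds the `dns` key to a docker daemon configuration (the content of `/etc/docker/daemon.json` on a typical Linux install). The function name, the existing `log-driver` setting and the two resolver addresses are assumptions picked for illustration, not necessarily the ones used here.

```python
import json


def with_public_dns(daemon_config: dict, servers: list[str]) -> dict:
    """Return a copy of a daemon.json config with the "dns" key set."""
    updated = dict(daemon_config)
    updated["dns"] = list(servers)
    return updated


if __name__ == "__main__":
    # Hypothetical existing settings; a real file may contain other keys.
    current = {"log-driver": "json-file"}
    # Assumed public resolvers; replace them with the ones you trust.
    print(json.dumps(with_public_dns(current, ["1.1.1.1", "8.8.8.8"]), indent=2))
```

The printed JSON is what would go into the daemon configuration file; the docker daemon has to be restarted to pick it up.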

Read More